Insider threats pose a significant risk to an organization’s security and data, and traditional methods of detecting these threats have proved inadequate. This has led to a growing interest in the use of artificial intelligence (AI) to detect insider threats. AI can analyse large amounts of data from various sources, such as employee activity logs and network traffic, to identify unusual patterns or behaviours that may indicate a potential threat. In this essay, we will explore the use of AI to detect insider threats, its potential benefits and drawbacks, and the challenges organizations face in implementing this technology.

AI and Insider Threat Detection

AI uses algorithms and computer programs to analyse large sets of data and identify patterns that would be difficult or impossible for humans alone to detect. In the context of insider threat detection, AI can analyse data from sources such as employee activity logs, network traffic, and security cameras to identify suspicious behaviour that may indicate an insider threat.

Machine learning algorithms are commonly used for insider threat detection. They are trained on large data sets to learn patterns of behaviour associated with insider threats, and can then recognize similar patterns in new data, allowing potential threats to be detected in real time. Deep learning, which uses neural networks to analyse complex data sets and find patterns that would be difficult for humans to detect, is another type of AI technique that can be used for insider threat detection.
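To make the idea of learning from past data and then scoring new data concrete, here is a minimal sketch. It is not how any particular product works: it swaps a full machine learning model for a simple per-user statistical baseline (a z-score), and the user names, daily download figures, and threshold are all hypothetical.

```python
from statistics import mean, stdev

def fit_baseline(history):
    """Learn a per-user baseline (mean, standard deviation) from past daily values."""
    return {user: (mean(values), stdev(values)) for user, values in history.items()}

def anomaly_score(baseline, user, value):
    """Return how many standard deviations a new value lies from the user's baseline."""
    mu, sigma = baseline[user]
    if sigma == 0:
        return 0.0 if value == mu else float("inf")
    return abs(value - mu) / sigma

# Hypothetical training data: megabytes downloaded per day by each user.
history = {
    "alice": [120, 135, 110, 128, 140, 125, 130],
    "bob": [40, 55, 38, 47, 52, 45, 50],
}
baseline = fit_baseline(history)

# Hypothetical new observations to score against the learned baseline.
new_events = [("alice", 132), ("bob", 900)]
THRESHOLD = 3.0  # assumed cut-off; a real deployment would tune this

flagged = [(user, value) for user, value in new_events
           if anomaly_score(baseline, user, value) > THRESHOLD]
print(flagged)
```

In this sketch only the second observation is flagged, because it is far outside that user's usual range, while the first sits comfortably within normal variation.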

Benefits of Using AI for Insider Threat Detection

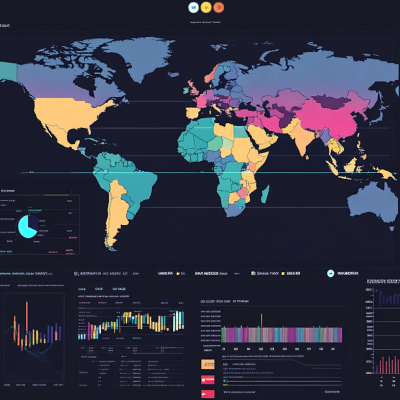

One of the most significant benefits of using AI for insider threat detection is that it can analyse large amounts of data much more quickly, and often more consistently, than humans can. This allows potential threats to be detected in real time, rather than after the fact. AI can also identify patterns of behaviour that would be difficult for humans to spot across large volumes of logs, such as an employee accessing sensitive data outside of normal working hours or downloading large amounts of data to an external device.
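The two behaviours mentioned above can be expressed as simple checks over an activity log. The sketch below is illustrative only: the `Event` structure, the action names, the working hours, and the size limit are assumptions, and a real system would learn or configure these per organization.

```python
from dataclasses import dataclass
from datetime import datetime

@dataclass
class Event:
    """One hypothetical activity-log record."""
    user: str
    timestamp: datetime
    action: str              # e.g. "sensitive_read" or "external_copy"
    bytes_transferred: int = 0

WORK_START, WORK_END = 8, 18             # assumed working hours, 08:00 to 18:00
MAX_EXTERNAL_BYTES = 500 * 1024 * 1024   # assumed limit of 500 MiB per transfer

def reasons_to_flag(event):
    """Return a list of reasons this event looks unusual (empty if none)."""
    reasons = []
    hour = event.timestamp.hour
    if event.action == "sensitive_read" and not (WORK_START <= hour < WORK_END):
        reasons.append("sensitive access outside working hours")
    if event.action == "external_copy" and event.bytes_transferred > MAX_EXTERNAL_BYTES:
        reasons.append("large transfer to external device")
    return reasons

events = [
    Event("alice", datetime(2024, 3, 4, 10, 15), "sensitive_read"),
    Event("bob", datetime(2024, 3, 4, 23, 40), "sensitive_read"),
    Event("carol", datetime(2024, 3, 5, 14, 5), "external_copy", 2 * 1024**3),
]

flagged = {e.user: reasons_to_flag(e) for e in events if reasons_to_flag(e)}
for user, reasons in flagged.items():
    print(user, reasons)
```

Fixed rules like these are easy to explain to investigators, but they only catch what they were written for, which is why they are usually combined with learned baselines.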

AI can also help to reduce false positives, which are a common problem with traditional methods of detecting insider threats. Audits and background checks can generate many false positives, which can be time-consuming and expensive to investigate. AI algorithms, on the other hand, can be trained to identify patterns of behaviour that are highly indicative of a potential threat, reducing the number of false positives.

Another benefit of using AI for insider threat detection is that it can monitor employee behaviour in real time, allowing potential threats to be detected as they occur. This helps organizations respond quickly and limit any damage. In addition, AI can be used to identify trends and patterns in employee behaviour over time, allowing organizations to spot warning signs before an incident occurs.

Drawbacks of Using AI for Insider Threat Detection

While there are many potential benefits to using AI for insider threat detection, there are also several drawbacks that must be considered. One of the most significant is the potential for false negatives. AI algorithms can only detect patterns of behaviour that they have been trained to recognize. If a new type of insider threat emerges that has not been previously identified, the AI may not be able to detect it. This means that organizations must also rely on other methods of detecting insider threats, such as audits and background checks, rather than depending on AI alone.
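False positives and false negatives pull against each other: a stricter alert threshold raises fewer false alarms but misses more real threats. The following sketch counts both for two thresholds. The risk scores and the labels marking which cases were real threats are invented purely for illustration.

```python
def confusion_counts(scores, labels, threshold):
    """Count true/false positives and negatives when alerting at or above a threshold."""
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for score, is_threat in zip(scores, labels):
        alerted = score >= threshold
        if alerted and is_threat:
            counts["tp"] += 1      # real threat, correctly flagged
        elif alerted and not is_threat:
            counts["fp"] += 1      # innocent activity flagged (false positive)
        elif not alerted and is_threat:
            counts["fn"] += 1      # real threat missed (false negative)
        else:
            counts["tn"] += 1      # innocent activity, correctly ignored
    return counts

# Hypothetical model risk scores and whether each case was a real threat.
scores = [0.95, 0.80, 0.65, 0.40, 0.30, 0.20, 0.10, 0.05]
labels = [True, False, True, False, True, False, False, False]

strict = confusion_counts(scores, labels, threshold=0.7)
lenient = confusion_counts(scores, labels, threshold=0.25)
print("strict: ", strict)
print("lenient:", lenient)
```

With this made-up data, the strict threshold misses two of the three real threats, while the lenient one catches all three at the cost of one extra false alarm.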

Another drawback of using AI for insider threat detection is the potential for bias. AI algorithms trained on biased data sets can produce biased decisions. This is particularly problematic in insider threat detection, because it may lead to innocent employees being identified as potential threats. Organizations must take steps to ensure that their AI algorithms are not biased and do not discriminate against certain groups of employees.
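One simple way to start checking for bias is to compare how often different groups of employees are flagged. The sketch below assumes a hypothetical grouping by shift and invented alert outcomes. A large gap between groups does not prove discrimination, but it is a signal worth investigating, for example if an "outside normal working hours" rule penalizes night-shift staff.

```python
from collections import defaultdict

def flag_rate_by_group(records):
    """Return the fraction of records flagged within each group."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, was_flagged in records:
        totals[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}

# Hypothetical alert outcomes as (employee group, was the employee flagged?).
records = (
    [("day_shift", True)] * 2 + [("day_shift", False)] * 18
    + [("night_shift", True)] * 6 + [("night_shift", False)] * 14
)

rates = flag_rate_by_group(records)
gap = max(rates.values()) - min(rates.values())
for group, rate in rates.items():
    print(f"{group}: {rate:.0%} flagged")
print(f"gap between groups: {gap:.0%}")
```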

One of the most effective ways to mitigate insider threats is to monitor for and detect unsanctioned or undesirable staff activity. It’s not as difficult as you might think. Using a platform such as ShadowSight, which uses AI, will supercharge your work.

Strategic Advisor, ShadowSight

Who is Christopher McNaughton?

Chris is a proficient problem solver with a strategic aptitude for anticipating and addressing potential business issues, particularly in areas such as Insider Threat, Data Governance, Digital Forensics, Workplace Investigations, and Cyber Security. He thrives on turning intricate challenges into opportunities for increased efficiency, offering pragmatic solutions derived from a practical and realistic approach.

Starting his career as a law enforcement Detective, Chris transitioned to multinational organisations where he specialised and excelled in Cyber Security, proving his authority in the field. Even under demanding circumstances, his commitment to delivering exceptional results remains unwavering, underpinned by his extraordinary ability to understand both cyber and business problems swiftly, along with a deep emphasis on active listening.

What is ShadowSight?

ShadowSight is an innovative insider risk staff monitoring tool that proactively guards your business against internal threats and safeguards vital data from unauthorised access and malicious activities. We offer a seamless integration with your current systems, boosting regulatory compliance while providing unparalleled visibility into non-compliant activities to reinforce a secure digital environment. By prioritising actionable intelligence, ShadowSight not only mitigates insider threats but also fosters a culture of proactive risk management, significantly simplifying your compliance process without the overwhelming burden of false positives.